CHILDREN’S DATA PROTECTION UNDER INDIA’S DPDP ACT, 2023

By the SolvLegal Team

Published on: May 16, 2026, 3 p.m.

QUICK SUMMARY

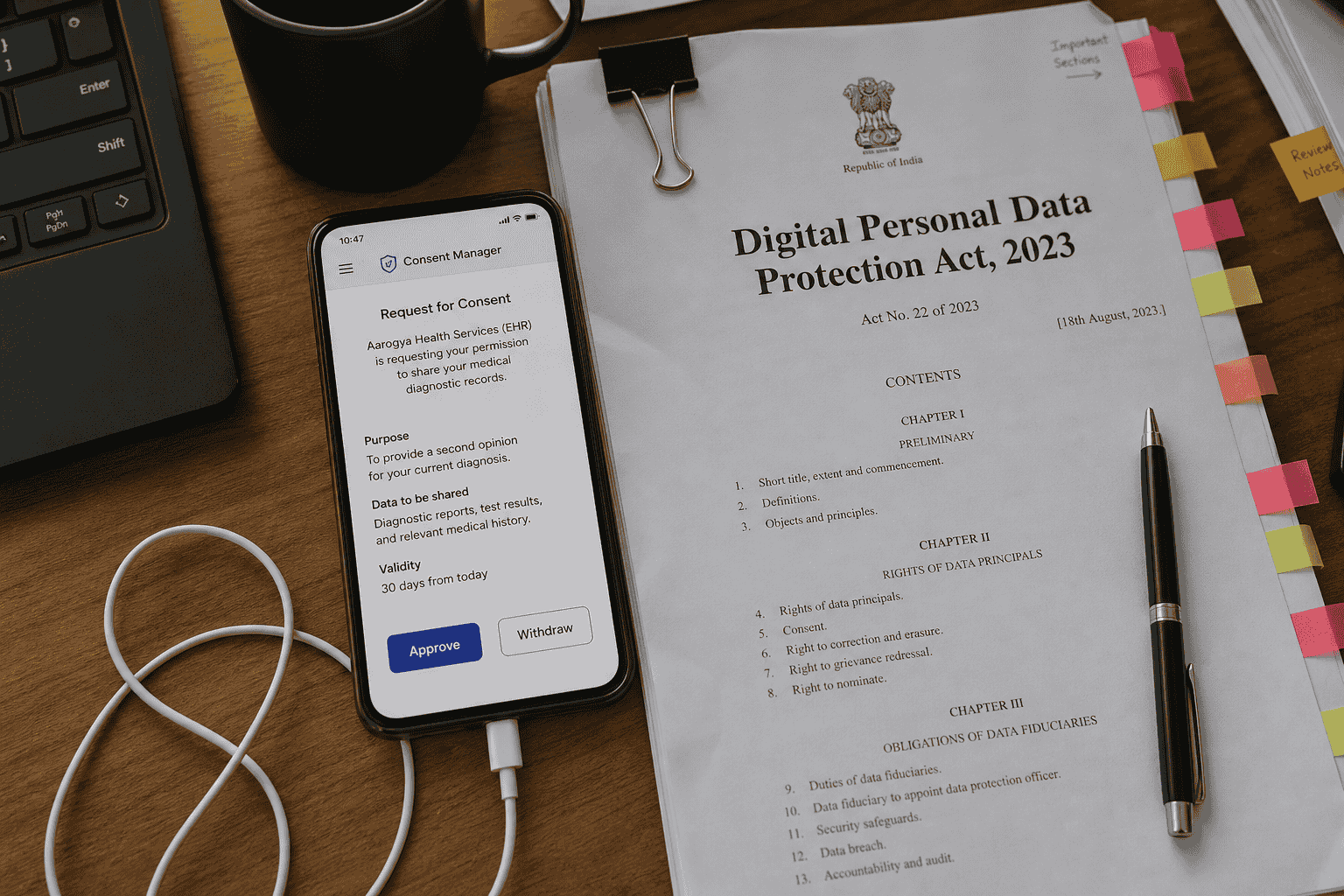

The Digital Personal Data Protection Act, 2023 (“DPDP Act”) introduces enhanced compliance obligations for businesses processing children’s personal data in India. Under the DPDP Act, a child is defined as any individual below 18 years of age, and organisations are required to obtain verifiable parental consent before collecting or processing a child’s personal data. The law also places significant restrictions on behavioural monitoring, tracking, profiling, and targeted advertising directed at children.

Businesses operating in sectors such as EdTech, gaming, healthcare, e-commerce, social media, and digital platforms that interact with minors must reassess their data collection and privacy practices to ensure compliance with the DPDP Act. This includes implementing age verification mechanisms, maintaining parental consent records, adopting child-friendly privacy notices, and strengthening cybersecurity safeguards. Failure to comply with children’s data protection obligations may expose businesses to substantial financial penalties, regulatory action, and reputational risks. As India’s data protection framework continues to evolve, organisations handling children’s data should adopt a proactive privacy-by-design approach and establish robust child data governance mechanisms.

INTRODUCTION

The rapid growth of digital platforms, online learning tools, gaming applications, social media platforms, and connected devices has significantly increased the volume of children’s personal data being collected and processed in India. As children become increasingly active in the digital ecosystem, concerns surrounding online tracking, behavioural profiling, targeted advertising, and misuse of personal information have also intensified. In response to these evolving privacy risks, India introduced specific safeguards for children under the Digital Personal Data Protection Act, 2023 (“DPDP Act”).

The DPDP Act establishes a comprehensive framework governing the processing of digital personal data in India and imposes enhanced obligations on organisations handling children’s data. Unlike several international privacy regimes that adopt lower age thresholds, the DPDP Act defines a child as any individual below 18 years of age. The legislation further requires organisations to obtain verifiable parental consent before processing children’s personal data and prohibits certain practices considered harmful to minors, including behavioural monitoring and targeted advertising.

These obligations are particularly relevant for businesses operating in sectors such as EdTech, gaming, healthcare, e-commerce, social media, OTT platforms, and mobile applications that may directly or indirectly collect children’s personal data. Organisations can no longer treat children’s privacy compliance as a secondary operational issue. Instead, businesses must now integrate child-specific privacy safeguards into their product design, consent management systems, advertising practices, and broader data governance frameworks.

The increasing regulatory focus on digital privacy and child safety also reflects a broader global trend toward stronger protections for minors online. Businesses operating across jurisdictions must therefore assess how India’s DPDP framework aligns with international privacy regimes such as the EU General Data Protection Regulation (“GDPR”) and the U.S. Children’s Online Privacy Protection Act (“COPPA”).

For the official text of the DPDP Act, businesses may refer to the Digital Personal Data Protection Act, 2023 as published by the Ministry of Electronics and Information Technology (MeitY) and to the official Gazette notification published by the Government of India.

WHO IS A CHILD UNDER THE DPDP ACT?

One of the most significant aspects of the Digital Personal Data Protection Act, 2023 (“DPDP Act”) is its broad definition of a “child.” Under Section 2(f) of the DPDP Act, a child means any individual who has not completed the age of 18 years. This definition has substantial compliance implications for businesses operating digital platforms, websites, mobile applications, and online services in India, particularly those catering to teenagers and young users.

Unlike certain international privacy frameworks that prescribe lower age thresholds for digital consent, the DPDP Act adopts a comparatively stricter approach. For instance, under the EU General Data Protection Regulation (“GDPR”), the default age for lawful consent in relation to information society services is 16 years, although member states may reduce it to 13 years. Similarly, the U.S. Children’s Online Privacy Protection Act (“COPPA”) primarily applies to children below the age of 13. India’s DPDP framework, however, extends enhanced privacy protections to all individuals below 18 years of age, thereby significantly expanding the scope of compliance obligations for businesses operating in India.

This higher age threshold creates practical and operational challenges for organisations that process user data at scale. Businesses that may not traditionally consider themselves “child-focused” platforms, including e-commerce websites, gaming applications, social media platforms, online educational services, fintech applications, and healthcare platforms, may still process children’s personal data if users below 18 years access their services. Consequently, organisations must carefully evaluate whether their platforms are likely to attract minors and whether additional child data protection measures are required under the DPDP Act.

The broad definition also increases the importance of implementing effective age verification and parental consent mechanisms. Businesses can no longer rely solely on generic user declarations or passive consent structures where minors may misrepresent their age. Instead, organisations should adopt reasonable and proportionate measures to identify child users and ensure compliance with statutory obligations relating to parental consent and restricted data processing activities.
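The 18-year threshold can be illustrated with a minimal Python sketch. It assumes a date of birth has already been established through some verification step (a self-declared date alone may not be sufficient, as noted above). The function names and the handling of 29 February birthdays are illustrative assumptions, not anything prescribed by the DPDP Act.

```python
from datetime import date

# Assumption for illustration: the statutory threshold is expressed as
# "has not completed the age of 18 years".
CHILD_AGE_THRESHOLD = 18


def completed_years(date_of_birth: date, on_date: date) -> int:
    """Return the number of full years completed as of on_date."""
    years = on_date.year - date_of_birth.year
    # Subtract one if the birthday has not yet occurred in on_date's year.
    if (on_date.month, on_date.day) < (date_of_birth.month, date_of_birth.day):
        years -= 1
    return years


def is_child(date_of_birth: date, on_date: date) -> bool:
    """True if the individual has not completed 18 years on on_date."""
    return completed_years(date_of_birth, on_date) < CHILD_AGE_THRESHOLD
```

Under this sketch, a user born on 17 May 2010 is treated as a child on 16 May 2028 and as an adult from 17 May 2028 onward.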

India’s approach reflects a growing global regulatory trend toward strengthening online safety and privacy protections for minors. Regulators worldwide are increasingly scrutinising digital platforms that collect or monetise children’s data, particularly where such processing involves profiling, behavioural advertising, addictive design patterns, or algorithmic recommendations targeted toward younger users. As a result, businesses operating internationally should align their child privacy compliance programs with both Indian and global privacy standards.

WHAT CONSTITUTES CHILDREN’S PERSONAL DATA?

The Digital Personal Data Protection Act, 2023 (“DPDP Act”) adopts a broad interpretation of “personal data,” which means any data about an individual who is identifiable by or in relation to such data. When such information relates to an individual below 18 years of age, it becomes children’s personal data and attracts enhanced compliance obligations under the DPDP framework.

In practical terms, children’s personal data extends far beyond basic identifiers such as a child’s name or age. It may include information collected directly from children, indirectly through device usage, or automatically through digital tracking technologies. Businesses should therefore avoid assuming that only sensitive or educational records qualify as protected child data.

Examples of children’s personal data may include:

· Name, date of birth, gender, and contact details;

· School information and academic records;

· Parent or guardian information linked to the child;

· Photographs, videos, and voice recordings;

· Device identifiers, IP addresses, cookies, and online identifiers;

· Geolocation and real-time location tracking data;

· Browsing history and behavioural activity;

· Search history, gaming activity, and app usage patterns;

· Health-related information collected through healthcare or wellness applications;

· Biometric identifiers or facial recognition data;

· User-generated content uploaded by minors on digital platforms.

The scope of children’s personal data is particularly relevant for digital businesses that rely heavily on analytics, personalised recommendations, advertising technologies, and behavioural profiling. Many organisations may unknowingly process children’s personal data through cookies, SDKs, advertising trackers, or third-party integrations embedded within websites and mobile applications. As a result, businesses should conduct detailed data mapping exercises to identify whether any categories of children’s personal data are being collected, stored, shared, or processed across their digital infrastructure.
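A data mapping exercise of this kind could start from a simple inventory of data flows. The sketch below is a hypothetical Python illustration: the `DataFlow` fields, the system names in the usage example, and the set of purposes flagged for review are all assumptions chosen for demonstration, not categories defined by the DPDP Act.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    system: str                # hypothetical system, SDK, or vendor name
    data_category: str         # e.g. "device_identifier", "geolocation"
    purpose: str               # e.g. "analytics", "advertising"
    may_include_children: bool


# Assumption: purposes an organisation chooses to escalate for legal review.
PURPOSES_FLAGGED_FOR_REVIEW = frozenset({"advertising", "behavioural_profiling"})


def flows_needing_review(flows: list[DataFlow]) -> list[DataFlow]:
    """Return flows that may involve children and serve a flagged purpose."""
    return [
        flow for flow in flows
        if flow.may_include_children
        and flow.purpose in PURPOSES_FLAGGED_FOR_REVIEW
    ]
```

For example, an inventory containing an advertising SDK that receives device identifiers from a service open to teenagers would surface that flow for review, while a billing system used only by adult account holders would not.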

Importantly, the DPDP Act does not presently create a separate category of “sensitive personal data” as seen under earlier draft frameworks or international laws such as the GDPR. Nevertheless, businesses handling children’s data are expected to adopt higher standards of care given the increased regulatory emphasis on child privacy and online safety. This broad interpretation also has implications for artificial intelligence systems, recommendation algorithms, and ad-tech ecosystems that process user behaviour data to personalise content or advertisements. If such systems involve the profiling or monitoring of minors, businesses may face heightened scrutiny under the DPDP Act, particularly in light of the restrictions on behavioural monitoring and targeted advertising directed at children.

For organisations operating digital products and online services, understanding the scope of children’s personal data is a critical first step toward building an effective DPDP Act compliance framework. Businesses should review their privacy policies, cookie practices, third-party integrations, and data processing activities to ensure that children’s personal data is identified and handled in accordance with applicable legal requirements.

VERIFIABLE PARENTAL CONSENT UNDER THE DPDP ACT

One of the most important compliance obligations introduced under the Digital Personal Data Protection Act, 2023 (“DPDP Act”) is the requirement to obtain verifiable parental consent before processing a child’s personal data. Under Section 9 of the DPDP Act, organisations acting as “Data Fiduciaries” must obtain consent from the parent or lawful guardian prior to collecting, storing, sharing, or otherwise processing personal data relating to a child.

The requirement for “verifiable” parental consent is particularly significant because it goes beyond obtaining ordinary user consent through standard click-wrap mechanisms or self-declarations. Organisations must implement reasonable measures to verify that the consent has genuinely been provided by a parent or lawful guardian and not by the child themselves. Although the DPDP Act and associated rules are still evolving, businesses should proactively adopt robust consent verification mechanisms to mitigate regulatory and compliance risks.

In practice, verifiable parental consent mechanisms may include:

1. OTP or email-based parent verification systems;

2. Government ID or KYC-based verification;

3. Parent-linked mobile authentication;

4. Credit/debit card verification methods;

5. Video-based verification processes;

6. Signed consent declarations from parents or guardians;

7. School-authorised consent structures for educational platforms.

The nature of the verification mechanism should generally be proportionate to the sensitivity of the data being processed and the risks associated with the processing activity. For example, platforms processing educational records, health-related data, biometric information, or location tracking data involving minors may require stronger verification measures than platforms collecting limited non-sensitive information.
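One way to express this proportionality in a product is a simple rule that maps the data categories being processed to a verification tier. The Python sketch below is illustrative only: the two tiers, the category labels, and the grouping of higher-risk categories are assumptions drawn from the examples in this article, not requirements stated in the DPDP Act.

```python
from enum import IntEnum


class VerificationTier(IntEnum):
    BASIC = 1     # e.g. OTP or email-based parent verification
    ENHANCED = 2  # e.g. government ID/KYC or video-based verification


# Assumption: categories treated here as warranting stronger verification.
HIGHER_RISK_CATEGORIES = frozenset(
    {"educational_records", "health", "biometric", "location"}
)


def required_tier(data_categories: set[str]) -> VerificationTier:
    """Pick the stronger tier if any higher-risk category is processed."""
    if data_categories & HIGHER_RISK_CATEGORIES:
        return VerificationTier.ENHANCED
    return VerificationTier.BASIC
```

A platform collecting only a display name and a guardian email would fall into the basic tier under this rule, while adding location tracking would move it to the enhanced tier.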

The parental consent requirement has major operational implications for businesses operating digital platforms in India. Many existing registration and onboarding workflows are not designed to identify minors or validate parental authorisation. As a result, organisations may need to redesign user interfaces, update consent management systems, modify privacy notices, and implement age-gating technologies to ensure compliance with the DPDP Act.

The compliance burden is particularly relevant for sectors such as EdTech, gaming, social media, OTT streaming, healthcare, and mobile applications where children frequently access digital services. Businesses relying on targeted advertising models, engagement-based algorithms, or behavioural analytics may also need to reassess their processing activities to ensure that child users are not inadvertently subjected to restricted profiling or monitoring practices.

Importantly, organisations should maintain proper records of parental consent to demonstrate compliance in the event of regulatory inquiries or investigations. Maintaining audit trails, consent logs, timestamps, and verification records can form a critical part of a defensible DPDP compliance framework.
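A consent log with timestamps and verification records might look like the following Python sketch. The field names, the two permitted actions, and the rule that the most recent event decides whether consent is active are assumptions made for illustration. A production system would also need durable, tamper-evident storage, which this in-memory example does not provide.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

VALID_ACTIONS = frozenset({"granted", "withdrawn"})


@dataclass(frozen=True)
class ConsentEvent:
    """One immutable entry in the consent audit trail."""
    child_account_id: str
    guardian_id: str
    purpose: str
    action: str               # "granted" or "withdrawn"
    verification_method: str  # e.g. "otp", "kyc", "signed_declaration"
    recorded_at: datetime     # timezone-aware UTC timestamp


@dataclass
class ConsentLog:
    """Log that is append-only by convention: events are only ever added."""
    events: list = field(default_factory=list)

    def record(self, child_account_id: str, guardian_id: str, purpose: str,
               action: str, verification_method: str,
               recorded_at: Optional[datetime] = None) -> ConsentEvent:
        if action not in VALID_ACTIONS:
            raise ValueError(f"Unknown consent action: {action!r}")
        event = ConsentEvent(
            child_account_id, guardian_id, purpose, action,
            verification_method, recorded_at or datetime.now(timezone.utc),
        )
        self.events.append(event)
        return event

    def has_active_consent(self, child_account_id: str, purpose: str) -> bool:
        """The most recent event for this child and purpose decides."""
        for event in reversed(self.events):
            if (event.child_account_id == child_account_id
                    and event.purpose == purpose):
                return event.action == "granted"
        return False
```

Because withdrawals are recorded as new events rather than deletions, the full history of grants and withdrawals remains available if a regulator asks how and when consent was obtained.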

Globally, parental consent obligations have become a central component of children’s privacy regulation. Similar obligations exist under frameworks such as the Children’s Online Privacy Protection Act (COPPA) in the United States and the General Data Protection Regulation (GDPR) in the European Union. Businesses operating across jurisdictions should therefore align their consent practices with international privacy standards while accounting for India’s broader age threshold under the DPDP Act.

RESTRICTIONS ON TRACKING, BEHAVIOURAL MONITORING, AND TARGETED ADVERTISING

The Digital Personal Data Protection Act, 2023 (“DPDP Act”) introduces some of India’s most significant child privacy protections by imposing restrictions on tracking, behavioural monitoring, and targeted advertising directed at children. These restrictions reflect growing global concerns regarding the impact of algorithmic profiling, addictive platform design, and digital advertising practices on minors.

Under Section 9 of the DPDP Act, Data Fiduciaries must not undertake processing of personal data that is likely to cause any detrimental effect on the well-being of a child. The law also specifically prohibits the tracking or behavioural monitoring of children and targeted advertising directed at children.

These restrictions have wide-ranging implications for digital businesses that rely on user analytics, personalised recommendations, engagement algorithms, advertising technologies, cookies, software development kits (SDKs), and profiling tools. Many online platforms routinely collect behavioural data such as browsing activity, watch history, search patterns, geolocation information, click behaviour, and app engagement metrics to optimise user experiences and deliver personalised content or advertisements. However, where such processing involves children, these practices may fall within the scope of restricted activities under the DPDP Act.

Behavioural monitoring generally refers to the tracking and analysis of an individual’s online activity, preferences, habits, interactions, or digital behaviour patterns over time. This may include:

1. tracking browsing behaviour across websites or applications;

2. analysing search history and engagement patterns;

3. monitoring screen time or user interactions;

4. profiling users based on interests or consumption habits;

5. collecting location or movement data;

6. creating predictive behavioural profiles through AI or algorithmic systems.

Similarly, targeted advertising involves displaying advertisements based on a user’s behaviour, interests, demographics, online activity, or profiling data. Many ad-tech ecosystems depend heavily on such profiling mechanisms to maximise advertising engagement and revenue generation. Under the DPDP Act, businesses processing children’s data must carefully evaluate whether their advertising and analytics frameworks involve prohibited profiling or targeting practices.

These obligations are particularly relevant for sectors such as:

a. social media platforms;

b. gaming applications;

c. video streaming and OTT services;

d. EdTech platforms;

e. mobile applications;

f. e-commerce websites;

g. online communities and content-sharing platforms.

Businesses should also pay close attention to the use of third-party advertising trackers, analytics integrations, cookies, pixels, and embedded SDKs within their digital products. Even where tracking technologies are implemented by external vendors, the organisation may still bear responsibility as a Data Fiduciary under the DPDP Act.

The restrictions on behavioural monitoring and targeted advertising align with broader international regulatory developments focused on child online safety. Regulatory authorities globally have increasingly scrutinised digital business models that monetise children’s attention, engagement, or behavioural data. For example, the UK Information Commissioner’s Office (“ICO”) introduced the Age Appropriate Design Code to establish child-centric privacy standards for online services, while the European Union and United States have also strengthened regulatory oversight of child-targeted advertising practices.

To mitigate compliance risks, organisations should adopt a privacy-by-design approach and implement child-safe defaults across their digital platforms. This may include disabling personalised advertising for minors, limiting tracking technologies, reducing unnecessary data collection, and ensuring that platform design does not encourage excessive engagement or exploit children’s vulnerabilities. Businesses should also conduct regular privacy audits and vendor reviews to identify whether any analytics or advertising tools process children’s data in a manner that could conflict with the DPDP Act.
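Child-safe defaults of this kind can be enforced centrally rather than left to individual features. The Python sketch below is a hypothetical illustration: the flag names are invented for the example, and treating users of unknown age as children is a conservative design assumption rather than a rule stated in the DPDP Act.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class FeatureFlags:
    personalised_ads: bool
    behavioural_profiling: bool
    third_party_trackers: bool


def apply_child_safe_defaults(flags: FeatureFlags, is_child: bool,
                              age_unknown: bool = False) -> FeatureFlags:
    """Switch off restricted features for children and unverified users."""
    if is_child or age_unknown:
        # Assumption: unknown age is handled the same way as a child account.
        return replace(
            flags,
            personalised_ads=False,
            behavioural_profiling=False,
            third_party_trackers=False,
        )
    return flags
```

Applying the override in one place means a newly added feature cannot re-enable tracking for a child account simply because its own default happens to be on.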

IMPACT ON BUSINESSES HANDLING CHILDREN’S DATA

The children’s data protection obligations introduced under the Digital Personal Data Protection Act, 2023 (“DPDP Act”) are expected to significantly impact businesses operating digital platforms and technology-driven services in India. Organisations can no longer treat children’s privacy compliance as a niche or sector-specific issue. Any business whose products or services are likely to be accessed by individuals below 18 years of age may potentially fall within the scope of the child data protection framework under the DPDP Act.

These compliance obligations are particularly relevant for industries such as:

a. EdTech and online learning platforms;

b. Gaming and entertainment applications;

c. Social media and community platforms;

d. E-commerce and online marketplaces;

e. Healthcare and wellness applications;

f. OTT streaming services;

g. FinTech and payment applications;

h. Mobile applications and SaaS platforms;

i. Educational institutions and online tutoring services.

One of the most immediate operational challenges for businesses will involve implementing reliable age verification and parental consent mechanisms. Many digital platforms currently rely on basic self-declaration systems where users merely confirm that they meet the minimum age requirement. However, under the DPDP Act, organisations handling children’s personal data may need to adopt more robust verification systems to ensure that verifiable parental consent is obtained where required.

Businesses may therefore need to redesign several aspects of their digital infrastructure and user experience, including:

1. onboarding and registration workflows;

2. consent management systems;

3. cookie and tracking practices;

4. advertising and analytics frameworks;

5. privacy notices and disclosures;

6. user account controls and safety settings;

7. vendor and third-party data sharing arrangements.
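As a rough illustration of how a redesigned onboarding workflow might branch, the Python sketch below assumes two inputs that earlier steps would have to supply: whether the user is below 18, and whether parental consent has been verified. The decision names are hypothetical and the sketch does not cover how either input is established.

```python
from enum import Enum


class OnboardingDecision(Enum):
    PROCEED = "proceed"
    PROCEED_CHILD_MODE = "proceed_child_mode"
    AWAIT_PARENTAL_CONSENT = "await_parental_consent"


def onboarding_decision(is_child: bool,
                        parental_consent_verified: bool) -> OnboardingDecision:
    """Decide the next onboarding step for a new account."""
    if not is_child:
        return OnboardingDecision.PROCEED
    if parental_consent_verified:
        # Child account with consent: continue with child-specific safeguards.
        return OnboardingDecision.PROCEED_CHILD_MODE
    # Child account without consent: hold processing until consent arrives.
    return OnboardingDecision.AWAIT_PARENTAL_CONSENT
```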

The restrictions on behavioural monitoring and targeted advertising may also disrupt existing ad-tech and engagement-based business models. Companies that rely heavily on personalised advertising, recommendation engines, user profiling, or engagement analytics may need to reassess how their systems interact with child users. In certain cases, businesses may need to disable personalised advertising features for minors altogether or implement separate compliance workflows for child users.

EdTech platforms are likely to face heightened scrutiny under the DPDP framework given the volume and sensitivity of children’s personal data they routinely process. Educational technology companies often collect attendance records, academic performance data, behavioural insights, voice recordings, device usage information, and interaction analytics. Similarly, gaming and social media platforms frequently process behavioural and engagement data that may fall within the scope of restricted processing activities under the DPDP Act.

The compliance impact also extends to third-party service providers and vendors. Organisations should carefully review contracts and data-sharing arrangements involving analytics providers, cloud vendors, advertising networks, CRM systems, SDK providers, and marketing partners to ensure that children’s personal data is processed in accordance with statutory obligations. Vendor due diligence and contractual safeguards will therefore become increasingly important components of DPDP compliance programs.

From a governance perspective, businesses should integrate child privacy considerations into their broader data protection and cybersecurity frameworks. This may involve conducting data mapping exercises, privacy impact assessments, internal audits, and risk assessments specifically focused on children’s data processing activities. Organisations should also ensure that internal teams, including product, engineering, marketing, and compliance functions, understand the legal restrictions associated with children’s data under Indian privacy law.

Globally, regulators are increasingly moving toward stricter online safety and child privacy standards. Businesses operating internationally should therefore align their compliance frameworks with emerging global best practices such as the UK ICO Age Appropriate Design Code and the UNICEF Policy Guidance on Children’s Data Governance to strengthen child-centric privacy governance.

DPDP ACT COMPLIANCE CHECKLIST FOR BUSINESSES HANDLING CHILDREN’S DATA

Businesses processing children’s personal data under the Digital Personal Data Protection Act, 2023 (“DPDP Act”) should implement robust child privacy compliance measures to reduce regulatory and reputational risks. Given the enhanced protections applicable to minors, organisations should adopt child-specific data protection frameworks across their digital platforms and internal systems.

1. Implement Age Verification Mechanisms

Businesses should adopt reasonable age verification systems to identify users below 18 years of age. Depending on the nature of the platform and the sensitivity of the data processed, this may include OTP verification, parent-linked authentication, KYC-based verification, or AI-assisted age estimation tools.

2. Obtain Verifiable Parental Consent

Under the DPDP Act, organisations must obtain verifiable parental consent before processing children’s personal data. Businesses should maintain proper consent logs, audit trails, and records of consent collection and withdrawal to demonstrate compliance.

3. Review Tracking and Advertising Practices

Companies should assess whether their websites or applications use cookies, trackers, SDKs, or advertising technologies that may involve behavioural monitoring or targeted advertising directed at children. Businesses should consider disabling personalised advertising and profiling features for minors.

Businesses may also refer to the UK ICO Age Appropriate Design Code for child-centric digital privacy standards.

4. Use Child-Friendly Privacy Notices

Privacy notices and consent requests should be written in clear and accessible language so that both children and parents can understand how personal data is collected, used, shared, and retained.

5. Conduct Data Mapping and Risk Assessments

Businesses should identify all categories of children’s personal data processed across their systems, platforms, analytics tools, and third-party integrations. Organisations should also conduct periodic privacy and risk assessments for high-risk processing activities.

6. Strengthen Data Security Safeguards

Companies handling children’s data should implement strong cybersecurity measures such as encryption, access controls, secure storage practices, and breach response procedures to protect children’s personal information.

Businesses may also refer to the CERT-In Cyber Security Guidelines for cybersecurity and data protection guidance.

7. Review Vendor and Third-Party Agreements

Organisations should review contracts with cloud providers, analytics vendors, advertising partners, and third-party service providers to ensure that children’s personal data is processed in compliance with the DPDP Act.

8. Establish Internal Privacy Governance

Businesses should implement internal privacy policies, employee training programs, and child data handling procedures to strengthen overall DPDP Act compliance and child privacy governance.

PENALTIES AND REGULATORY RISKS FOR NON-COMPLIANCE

Non-compliance with children’s data protection obligations under the Digital Personal Data Protection Act, 2023 (“DPDP Act”) may expose businesses to significant financial penalties, regulatory scrutiny, and reputational damage. The DPDP Act empowers the Data Protection Board of India to investigate instances of non-compliance and impose monetary penalties depending on the nature, severity, and duration of the violation.

Businesses handling children’s personal data may face increased regulatory risk where they fail to:

a. obtain verifiable parental consent;

b. implement adequate security safeguards;

c. prevent unlawful tracking or behavioural monitoring of children;

d. restrict targeted advertising directed at minors;

e. maintain appropriate consent and compliance records.

Apart from financial exposure, regulatory investigations involving children’s data can also result in operational disruption, customer distrust, negative publicity, and heightened scrutiny from investors, business partners, and regulators. This is particularly relevant for digital businesses operating in sectors such as EdTech, gaming, social media, healthcare, and e-commerce where large volumes of children’s personal data may be processed.

The DPDP Act also reflects a broader global regulatory trend toward stronger child privacy enforcement. Regulators worldwide are increasingly scrutinising digital platforms that monetise children’s attention, behavioural data, or online activity through profiling and targeted advertising practices.

To mitigate legal and reputational risks, organisations should proactively implement child-specific privacy safeguards, strengthen internal governance frameworks, and regularly review their data processing activities involving minors.

BEST PRACTICES FOR PROTECTING CHILDREN’S PERSONAL DATA

Businesses processing children’s personal data should adopt a privacy-by-design approach and integrate child safety considerations into their digital products, platforms, and internal governance frameworks. As regulatory scrutiny around children’s online privacy continues to increase, organisations should move beyond minimum legal compliance and implement proactive safeguards to reduce legal, operational, and reputational risks.

One of the most important best practices is data minimisation. Businesses should only collect children’s personal data that is necessary for a legitimate and clearly defined purpose. Excessive collection of behavioural, location, biometric, or engagement-related data involving minors should be avoided unless strictly required.
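Data minimisation can be enforced in code with a per-purpose allow-list, so that fields not needed for a stated purpose are dropped before storage. The Python sketch below is illustrative: the purposes and field names are hypothetical examples, and each organisation would define its own list based on its documented purposes.

```python
# Assumption: hypothetical purposes and the fields each one actually needs.
ALLOWED_FIELDS_BY_PURPOSE = {
    "account_creation": frozenset(
        {"display_name", "date_of_birth", "guardian_email"}
    ),
    "course_progress": frozenset({"display_name", "lesson_id", "score"}),
}


def minimise(payload: dict, purpose: str) -> dict:
    """Keep only the fields allowed for the given purpose."""
    allowed = ALLOWED_FIELDS_BY_PURPOSE.get(purpose)
    if allowed is None:
        # Refuse to process data for a purpose that has not been defined.
        raise KeyError(f"No allow-list defined for purpose: {purpose!r}")
    return {key: value for key, value in payload.items() if key in allowed}
```

With this approach, a registration form that also submits precise location or a device identifier would have those fields discarded for the account creation purpose, and a request for an undefined purpose would fail rather than pass through unchecked.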

Organisations should also adopt child-friendly privacy practices by using clear and accessible privacy notices, transparent consent mechanisms, and default privacy settings that prioritise child safety. Platforms targeting or likely to be accessed by minors should avoid manipulative interface designs, addictive engagement mechanisms, or dark patterns that may exploit children’s vulnerabilities.

From a cybersecurity perspective, businesses should implement strong technical safeguards such as encryption, access controls, secure authentication systems, and periodic security audits to protect children’s personal information from unauthorised access, misuse, or data breaches.

Companies should further conduct regular vendor due diligence and assess whether third-party advertising, analytics, or tracking tools process children’s data in a manner consistent with the DPDP Act. Internal employee training, privacy governance frameworks, and periodic compliance reviews can also play a critical role in strengthening child data protection practices.

FREQUENTLY ASKED QUESTIONS (FAQS)

1. What is the age of a child under the DPDP Act, 2023?

Under the Digital Personal Data Protection Act, 2023 (“DPDP Act”), a child means any individual below 18 years of age. This is broader than several international privacy laws such as the GDPR and COPPA, which prescribe lower age thresholds for digital consent.

2. Is parental consent mandatory for processing children’s personal data under the DPDP Act?

Yes. The DPDP Act requires Data Fiduciaries to obtain verifiable parental consent before processing a child’s personal data. Businesses must implement reasonable mechanisms to verify that consent has genuinely been provided by the parent or lawful guardian.

3. Does the DPDP Act prohibit targeted advertising directed at children?

Yes. The DPDP Act restricts tracking, behavioural monitoring, and targeted advertising directed at children. Businesses using personalised advertising, analytics tools, cookies, or profiling technologies should carefully review whether such practices involve children’s personal data.

4. Which businesses are most affected by children’s data protection obligations under the DPDP Act?

Industries such as EdTech, gaming, social media, OTT platforms, healthcare, e-commerce, and mobile applications are likely to face heightened compliance obligations because they frequently process or interact with children’s personal data.

5. What compliance measures should businesses adopt for children’s data protection?

Businesses should implement age verification systems, verifiable parental consent mechanisms, child-friendly privacy notices, strong cybersecurity safeguards, restricted profiling practices, and vendor compliance reviews to align with the DPDP Act’s children’s data protection requirements.

RELATED BLOGS

A DPDP Compliance Checklist for reference:

https://solvlegal.com/resources/dpdp-act-2023-compliance-checklist-india/

CONCLUSION

The Digital Personal Data Protection Act, 2023 (“DPDP Act”) marks a significant shift in India’s approach toward children’s online privacy and digital safety. By imposing enhanced obligations relating to verifiable parental consent, behavioural monitoring, tracking, and targeted advertising, the DPDP Act places greater responsibility on businesses handling children’s personal data across digital platforms and online services.

As children increasingly engage with online learning platforms, gaming applications, social media, e-commerce websites, and connected technologies, organisations must adopt stronger child privacy governance frameworks and proactive compliance measures. Businesses can no longer rely on generic privacy practices and should instead implement child-specific safeguards such as age verification mechanisms, parental consent workflows, child-friendly privacy notices, restricted profiling practices, and enhanced cybersecurity controls.

The DPDP Act also reflects a broader global movement toward stricter regulation of digital platforms processing children’s data. Businesses operating internationally should therefore align their compliance programs with emerging global child privacy standards and privacy-by-design principles to minimise legal, operational, and reputational risks.

Organisations handling children’s personal data should proactively review their data collection practices, advertising models, third-party integrations, and internal governance structures to ensure compliance with India’s evolving data protection framework. Early adoption of robust child privacy practices will not only help mitigate regulatory exposure but also strengthen consumer trust and long-term digital governance.

ABOUT THE AUTHOR

This blog was written by Yashvardhan Singh, a legal professional focusing on legal research, contract analysis, and regulatory compliance. He works closely with corporate and technology-driven legal frameworks, with particular exposure to data protection, commercial documentation, and legal process optimisation. His work supports businesses in strengthening compliance structures and ensuring legally sound operations.

DISCLAIMER

The information provided in this article is for general educational purposes and does not constitute legal advice. Readers are encouraged to seek professional counsel before acting on any information herein. SolvLegal and the author disclaim any liability arising from reliance on this content.
